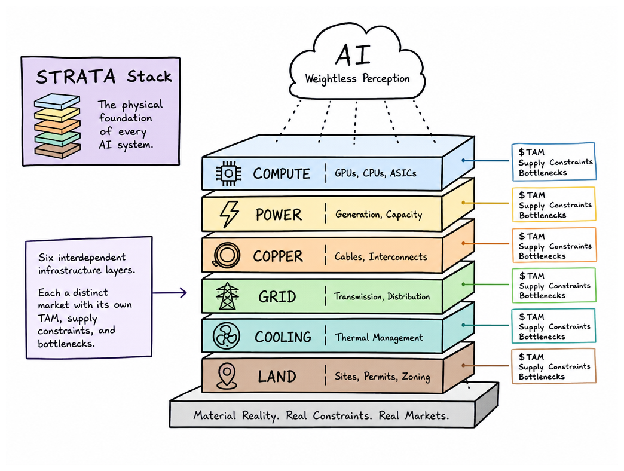

The Illusion of Weightlessness

AI runs in the cloud. It's serverless. Frictionless. Type in a query and the response just appears, like some digital god heard your prayer. Clean. Invisible. Pure information.

Unfortunately, that's a gorgeous lie, and the reality is the exact opposite. Think about all the steel, concrete, copper, water, and real load that goes into every training run and every inference. Training GPT-3 alone burned 1,287 megawatt-hours of electricity and emitted 502 metric tons of CO₂. A single ChatGPT query requires 2.9 watt-hours, 5 to 100x more than a Google search. That's not poetry. That's pressure on the water table, the grid, and real estate transforming into server farms.

The real infrastructure of AI is much heavier than it looks. And it's getting heavier by the second.

A Number That Defies the Cloud Metaphor

Here's the thing about clouds: they're weightless in the cultural imagination. They float. They dissolve. But the capital flowing into AI infrastructure isn't floating anywhere.

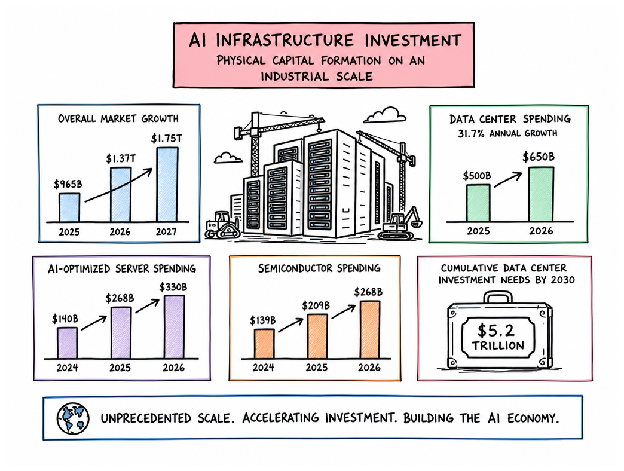

Worldwide AI infrastructure investment is expected to reach $965 billion by 2025, $1.37 trillion by 2026, and $1.75 trillion by 2027. That's not moonshot money. That's not a VC fever dream. That's concrete. Steel. Cooling towers humming in the Nevada desert. That's physical capital formation — the kind of money that moves markets in ways that matter.

Data center spending alone will hit $500B in 2025, then shoot to $650B in 2026, a jump of roughly 30% in a single year. And nobody's hitting the brakes.

| Category | 2024 | 2025 | 2026 |
|---|---|---|---|
| AI-Optimized Servers | $140B | $268B | $330B |
| AI Semiconductors | $139B | $209B | $268B |

Server spending more than doubles in two years. Semiconductor spending nearly doubles. And to keep up with AI demand by 2030, firms would have to invest a further $5.2 trillion in data centers: not to innovate, just to keep up. This is one of the largest industrial investment stories in living memory. And it's hiding behind silicon.
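The two-year growth multiples can be checked directly against the table. This is a minimal sketch using only the table's figures; the dictionary layout and function name are illustrative choices, not anything from the underlying forecasts.

```python
# Spending in billions of USD, copied from the table above.
spend = {
    "AI-Optimized Servers": {2024: 140, 2025: 268, 2026: 330},
    "AI Semiconductors": {2024: 139, 2025: 209, 2026: 268},
}


def growth_multiple(series, start, end):
    """Return end-year spending divided by start-year spending."""
    return series[end] / series[start]


multiples = {
    name: growth_multiple(series, 2024, 2026) for name, series in spend.items()
}

for name, multiple in multiples.items():
    print(f"{name}: {multiple:.2f}x from 2024 to 2026")
# AI-Optimized Servers: 2.36x from 2024 to 2026
# AI Semiconductors: 1.93x from 2024 to 2026
```

On these figures, server spending grows about 2.4x and semiconductor spending about 1.9x between 2024 and 2026.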

Layer One — Compute: Silicon Is the New Steel

Semiconductor spending climbs from $139B in 2024 to $209B in 2025, then $268B in 2026. Silicon stopped being a commodity. It's infrastructure now: the bedrock every AI ambition is standing on and praying doesn't crack.

Here's why the stack is fragile: most of it is made in Taiwan. Memory comes from South Korean manufacturers. Other fabs exist in Arizona. That's the whole stack. This geographic concentration creates a structural fragility — one fab fire, one geopolitical tremor, or one yield crisis and the entire STRATA Stack seizes.

Then there's the physics problem nobody signed up for. As chips get smaller, copper interconnects get more resistive. Resistance increases as dimensions shrink — now impacting chip-level performance. You're not fighting market forces. You're fighting physics.
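The scaling argument follows from the textbook resistance formula for a uniform conductor. As a simplified sketch, take $\rho$ as the resistivity, $L$ as the wire length, and $w$ and $h$ as the width and height of the cross-section $A$ (these symbols are introduced here for illustration):

```latex
R = \rho \frac{L}{A} = \rho \frac{L}{w\,h}
\qquad\Longrightarrow\qquad
R' = \rho \frac{L}{(w/2)\,(h/2)} = 4R
```

Halving both cross-section dimensions at a fixed length quadruples the resistance. This idealized picture treats $\rho$ as constant; in very narrow wires, electron scattering at surfaces and grain boundaries raises the effective resistivity as well, so the real penalty is larger.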

Silicon becomes dead weight without the layer dependencies feeding it:

- High-speed NVMe Storage (the I/O that keeps chips fed)

- Private 5G and Wi-Fi 7 (latency-sensitive networking)

- Ethernet time-sensitive networking (deterministic data movement)

The TAM is still expanding — nowhere near saturation — but the real constraint isn't demand. It's supply. And supply lives in a very small, very concentrated part of the world.

Layer Two — Power: The Grid Was Never Built for This

Silicon is always hungry — but now that hunger is architectural, rooted in the very foundation of computing. The grid has a problem it wasn't built to solve.

- Baseline increase is baked in. Data centers will use 448 TWh in 2025 and are projected to hit 980 TWh in 2030, roughly 17% compound annual growth. AI clusters don't breathe. The grid was designed assuming demand does.

- The US is absorbing an outsized share. Data centers used 4.4% of U.S. electricity in 2023, and that share is expected to triple by 2028. MIT's full delivery cost estimate: data centers could account for up to 21% of global energy demand by 2030. Twenty-one percent. That number breaks the model.

- The problem isn't volume. It's geometry. AI data centers require large, concentrated clusters of 24/7 baseload demand. Not flexible. Not intermittent. The existing grid expects valleys and peaks. AI clusters are flatlines. This architectural difference fractures everything downstream.
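The annual growth rate implied by the 448 TWh and 980 TWh figures can be worked out with the standard compound annual growth rate formula. A minimal sketch, assuming smooth compounding over the five years between 2025 and 2030; the function name is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text: 448 TWh in 2025, 980 TWh projected for 2030.
rate = cagr(448, 980, 2030 - 2025)
print(f"Implied growth: {rate:.1%} per year")
```

The result lands at roughly 17% per year, which means demand more than doubles over the period.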

| Scenario | 2025 | 2030 |
|---|---|---|
| Gartner Baseline | 448 TWh | 980 TWh |
| IEA Base Case | n/a | 945 TWh |
| MIT (Full Delivery Cost) | n/a | ~21% global energy |

Nuclear is now a structural solution, not just a nice-to-have. A single AI data center can require 100 MW or more of continuous baseload, which is triggering a wave of nuclear PPAs, the restart of Three Mile Island, and nascent SMR deals. The World Nuclear Association expects uranium demand to rise 28% by 2030 and double by 2040.

Layer Three — Copper: The Metal Running Through Everything

What's really running through a hyperscale data center? It's not just electricity. It's copper — threaded through every inch like pressure through a pipe. Load-bearing, essential, and usually invisible. Until it goes.

One massive data center needs 2,000+ tonnes of copper. That's around 27 tonnes per megawatt of capacity. And that's just one facility. As AI infrastructure explodes, data centers alone could account for 3% of total global copper demand by 2030. Cooling loops expand. Wiring density increases. Copper needs won't just rise in step with capacity. They'll rise faster.
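The two copper figures can be tied together with simple arithmetic. The sketch below takes the 27 tonnes per megawatt intensity at face value and scales it linearly, which is a simplifying assumption and, if density keeps increasing, closer to a floor than a forecast. The 1 GW campus is a hypothetical example, not a figure from the text.

```python
COPPER_TONNES_PER_MW = 27      # from the text: ~27 tonnes per MW of capacity
FACILITY_COPPER_TONNES = 2000  # from the text: 2,000+ tonnes for one large site


def implied_capacity_mw(copper_tonnes, tonnes_per_mw=COPPER_TONNES_PER_MW):
    """Capacity (MW) implied by a copper tonnage at a fixed intensity."""
    return copper_tonnes / tonnes_per_mw


def copper_needed_tonnes(capacity_mw, tonnes_per_mw=COPPER_TONNES_PER_MW):
    """Copper (tonnes) needed for a capacity, assuming linear scaling."""
    return capacity_mw * tonnes_per_mw


capacity = implied_capacity_mw(FACILITY_COPPER_TONNES)
print(f"2,000 t of copper implies about {capacity:.0f} MW")
# prints: 2,000 t of copper implies about 74 MW

# Hypothetical 1 GW (1,000 MW) campus, for scale only.
campus_tonnes = copper_needed_tonnes(1000)
print(f"A 1 GW campus would need about {campus_tonnes:,.0f} t")
# prints: A 1 GW campus would need about 27,000 t
```

So the 2,000-tonne figure corresponds to a facility of roughly 74 MW at the quoted intensity.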

| Copper's Role | Where It Matters | The Pressure |
|---|---|---|
| Facility wiring | Networking infrastructure, cooling loops, power distribution | Physical scale of hyperscale buildouts |
| Chip interconnects | AI processor architecture, memory hierarchies, GPU stacks | Scaling challenges as transistors shrink |

Here's where it gets rough: mining and refining timelines lag AI deployment timelines by years. It's an accelerating, near-vertical demand curve against a geological, multi-year supply curve. Mines don't care when you deploy training jobs. And this tension, the distance between what you need and what you can get, will become a hard limit on where you build your next facility.

Layers Four & Five — Water, Cooling, and the Hidden Real Estate Market

You also have to cool the chips. Which is the thing nobody talks about when fantasizing about AI clusters. Not an afterthought. Not optional. The thermal problem becomes the entire problem.

The water draw is enormous: approximately 1.7 liters per kWh for cooling, against data center demand projected to hit ~945 TWh by 2030. Every megawatt-scale facility has to solve the thermal constraint or it doesn't run. Cooling becomes the data center. The architecture flips.
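To get a feel for the scale, the two figures can be multiplied out. This is a rough sketch that assumes the 1.7 liters per kWh rate applies uniformly to all 945 TWh, which real facilities will not match exactly since cooling designs and climates differ. Treat the result as an order-of-magnitude estimate.

```python
LITERS_PER_KWH = 1.7   # from the text: approximate cooling water per kWh
KWH_PER_TWH = 1e9      # 1 TWh = 1,000,000,000 kWh
LITERS_PER_KM3 = 1e12  # 1 cubic kilometer = 1e12 liters


def cooling_water_liters(demand_twh, liters_per_kwh=LITERS_PER_KWH):
    """Cooling water (liters) for a given electricity demand in TWh."""
    return demand_twh * KWH_PER_TWH * liters_per_kwh


# Figure from the text: ~945 TWh of data center demand by 2030.
liters = cooling_water_liters(945)
cubic_km = liters / LITERS_PER_KM3
print(f"About {liters:.2e} liters, or {cubic_km:.1f} cubic kilometers per year")
```

Under that assumption the draw comes to about 1.6 trillion liters a year, or roughly 1.6 cubic kilometers of water.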

| Cooling Technology | Adoption | Key Trade-offs |
|---|---|---|
| Liquid cooling | Growing | High efficiency, footprint reduction |
| Immersion systems | Emerging | Maintenance challenges, chip compatibility |
| Direct-to-chip | Advanced | Density gains, thermal headroom critical |

And where it gets truly strange: land and water rights are now going toe-to-toe with power and silicon. Specialized real estate operators buy parcels specifically for data centers. Proximity to power substations, available water, specific zoning — attributes that were economically dead five years ago are now driving land value in certain geographies. McKinsey estimates cumulative data center capex includes roughly $0.3 trillion for shell and site, and $0.1 trillion for land acquisition alone. That's not incidental. That's its own market.

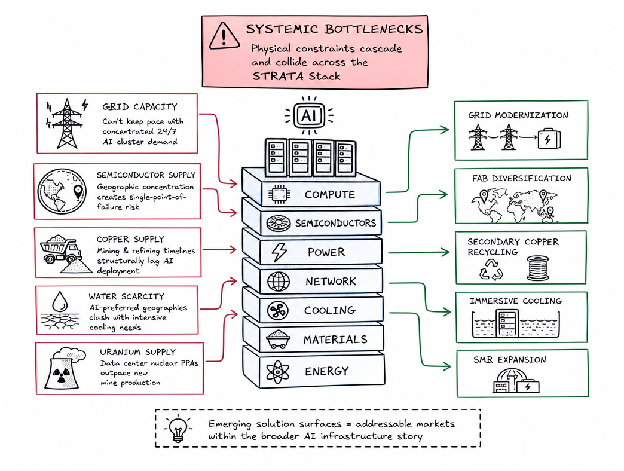

The SAM vs The TAM — Where the Bottlenecks Are

The $2 trillion pie is out in the open. But almost no one is noticing the floor beneath the table.

The STRATA Stack isn't elegant. It's a trench coat filled with five impossible problems — each depending on the others being solved, and each fracturing now:

- Grid collapse in slow motion — AI clusters require round-the-clock, concentrated baseload power that our existing grids were never designed to accommodate. Transmission lines aren't built for these loads. Generation capacity won't catch up for years.

- Semiconductor geographic concentration: South Korea and Taiwan are single points of failure for the entire semiconductor stack. One disruption and silicon availability collapses.

- Copper lag — Copper mining operates on geological timelines while AI deployment happens in quarters. The copper you need doesn't exist yet.

- Water and geography — AI clusters prefer dry climates for cheap power and land. Dry climates are where there's no water. The cooling infrastructure you need is exactly where you can't find it.

- Uranium tightening: Nuclear PPAs are accelerating faster than mines can deliver. This is a real timeline crunch, not a theoretical risk.

| Pain Point | Near-Term Valve | Emerging Layer |
|---|---|---|
| Grid | Distributed generation and co-location with power plants | Smart transmission and dynamic load balancing |
| Semiconductors | Fab diversification and advanced packaging | Alternative interconnect materials beyond copper |
| Copper | Alloy substitution and secondary recycling | Wiring density optimization |
| Water | Immersion liquid cooling | Direct-to-chip technologies |
| Uranium | Long-term offtake agreements | SMR fleet expansion |

They're not problems. They're a market. A weird, underpriced, slightly terrifying market that nobody's talking about yet — being priced like footnotes when they should be priced like load-bearing walls.

Telco and Edge: The $35–70B Opportunity in the Middle

Eyes are mostly on hyperscale data centers in the middle of the desert. Fair enough. They're big buildings. Easy to point at. But between now and 2030 there's a layer of the STRATA Stack that could be worth $35–70 billion — and the telcos sit on it.

That's edge compute. The middle layer between mega-clusters and the endpoints where decisions actually get made. The private 5G, 6G, mesh SD-WAN, and time-sensitive Ethernet that lets inference happen near the user instead of bouncing to a hyperscaler's co-lo fortress and back. Telco operators aren't building this from scratch. They're re-purposing existing spectrum, fiber, and towers: physical infrastructure that is about to explode in value as edge AI workloads increase. Almost nobody is watching this layer, and that neglect is exactly the gap where structural value concentrates.

A Snapshot of the TAM by Layer

| Layer | Market Size | The Real Constraint |
|---|---|---|
| Compute | $330B servers + $268B semiconductors [2026] | Silicon fab capacity |
| Data Center Infrastructure | $650B [2026]; $5.2T cumulative by 2030 | Real estate + permitting speed |
| Power (Grid) | ~980 TWh demand by 2030 | Baseload generation |
| Uranium / Nuclear | 28% demand rise by 2030; doubles by 2040 | Mining + enrichment pipeline |
| Copper | 3% of global demand by 2030 | Extraction rates |
| Land, Cooling, Real Estate | ~$0.4T cumulative spend | Zoning + water access |
| Telco & Edge | $35B–$70B by 2030 | Last-mile fiber deployment |

These are not footnotes beneath a forecast for software revenue. They're standalone markets. The copper doesn't care that your model runs on the latest transformer architecture. Power doesn't care about your API. The grid doesn't care about your inference latency.

When one layer races ahead of the others' ability to supply, it spells dislocation — and things get genuinely ugly. The physical world couldn't care less about a software roadmap. That's where you look. That's where the alpha concentrates.

The Dirt Beneath the Cloud

What is actually holding up the cloud? You've got the numbers — $268 billion here, $5.2 trillion there, copper climbing, uranium accelerating, land parcels vanishing into data center shells. But here's what should be keeping you up at night: AI feels weightless. The code floats. The model trains in some ethereal void.

But the cloud doesn't exist. Just earth. Excavated. Poured. Wired. Fed with enough baseload power that a 20th-century industrialist would cry with jealousy. The stack doesn't float. It weighs on everything.

AI stops being a story about algorithms and starts becoming a story about constraints once you see what the STRATA layers really are: geological extraction with a silicon veneer, not software infrastructure. Power grids, water tables, and ore bodies that weren't built for this — buried in bottlenecks. Someone is digging up the earth for silicon dreams. The market hasn't even started to price what's coming out of the ground yet.

The code is the product. The stack is the price.

The Red Planet Version of STRATA

And then there's the Musk problem. Or opportunity. Depends how caffeinated your imagination is. If launch costs keep falling and off-world industrial logistics stop sounding like science fiction and start sounding like capex, the STRATA Stack could flip from a blue-planet constraint map into a red-planet one.

Same logic. Different gravity well. Compute still needs power. Habitats still need cooling. Robotics still need metals, excavation, transmission, and ridiculous amounts of energy density. Mars doesn't erase the stack — it makes the stack even more naked. No lush grid to lean on. No abundant atmosphere. No hidden slack. Just raw industrial dependency, exposed under a smaller sky.

If that shift ever stops being theater and starts becoming procurement, the market won't just be pricing AI infrastructure on Earth. It'll be pricing the first extraterrestrial version of it too. Which is a fairly insane sentence. And not obviously a wrong one.

When Dharma Decays, Capital Compounds.